Data’s Perfect Storm - Processing The Zettabyte

We stand not at the beginning of the information revolution but on the river bank, moments before the banks burst and change the landscape forever. As our far more learned peers will tell us, the future behaves like chaos theory: every development, every variation, every idea has the potential to change where the bank breaks and what change it brings with it. In this article I have selected some of the biggest topics in our virtual river: Big Data, IPv6 and the Internet of Things. These areas are intrinsically linked, and each feeds the growth in information. But that growth is not the problem in my eyes. The problem, the perfect storm, is that the hardware we use to handle and interpret data is no longer fit for purpose.

Let us start with IPv6 to see why I have come to this controversial conclusion. In a standard IPv4 exchange, our home routers send a request to our ISP's (or another) DNS server, which resolves a name to one address out of all the possible locations your data could be sent to. In IPv4 the address space runs up to 255.255.255.255, or roughly 4.3 billion (2^32) locations. Expand this to IPv6 and we find ourselves dealing with a hexadecimal space of roughly 340 undecillion (2^128) possible locations. Tracking individual addresses at that scale would demand far more processing power, so much more that ISPs do not route single IPv6 addresses and instead allocate huge blocks of IPs to individual customers. So whilst we have vastly more IPs available, we cannot use anything like the intended quantity. Why? In my view, simply because the hardware is not capable.
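To put rough numbers on the jump from IPv4 to IPv6, here is a minimal Python sketch using the standard `ipaddress` module. The customer block is an assumption for illustration only: the article does not say how large an ISP allocation is, so the sketch uses a /64 carved from the reserved documentation prefix `2001:db8::/32`.

```python
import ipaddress

# Total address counts follow directly from the address widths:
# IPv4 addresses are 32 bits, IPv6 addresses are 128 bits.
ipv4_total = 2 ** 32
ipv6_total = 2 ** 128

# Hypothetical customer allocation (an assumption, not a figure from
# the article): a single /64 taken from the documentation prefix.
customer_block = ipaddress.ip_network("2001:db8:0:1::/64")

# How many complete IPv4 internets would fit inside that one block?
ipv4_internets_per_block = customer_block.num_addresses // ipv4_total

print(f"IPv4 addresses:          {ipv4_total:,}")
print(f"IPv6 addresses:          {ipv6_total:,}")
print(f"Addresses in one /64:    {customer_block.num_addresses:,}")
print(f"IPv4 internets per /64:  {ipv4_internets_per_block:,}")
```

Under that assumption, one customer block alone holds as many addresses as billions of complete IPv4 address spaces, which gives a sense of how little of the range is addressed individually.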

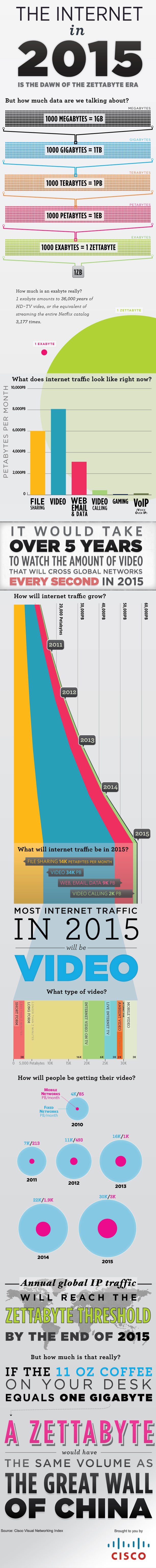

This brings us on to the Internet of Things. For those still struggling with the ever-increasing terminology, this refers to one machine communicating with another over the internet without human interaction. This allows for a huge array of possibilities, like your fridge ordering more milk when it senses you are running low or, more intelligently, noticing unusual milk consumption alongside an increase in the number of people using the front door. Clever stuff! But only if we have enough IPs. What I want to consider here is how we make sense of this data. If we need to programme and process all of it, we need servers and power. Looking at the infographics prepared by organisations like Cisco, we see that the data recorded in 2013 will exceed all the data ever collected since the beginning of time. I imagine this will double next year.

The infographic below introduces the zettabyte, a unit so large that I have to explain it to every person I mention it to. Processing, storing and making sense of this data is going to require a huge revolution in hardware capability and, more than that, a huge reduction in the cost of this hardware. Failure to achieve this will limit the successful implementation and usefulness of advances such as the Internet of Things, and leave the information revolution adrift, awaiting rescue.
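For readers who want the zettabyte in concrete terms, the short Python sketch below works through the arithmetic. It assumes the decimal (SI) definition, where one zettabyte is 10^21 bytes. The 1 TB drive is a hypothetical yardstick chosen for illustration, not a figure from the infographic.

```python
# Decimal (SI) byte units, each 1,000 times the previous one.
UNIT_NAMES = ["kilobyte", "megabyte", "gigabyte", "terabyte",
              "petabyte", "exabyte", "zettabyte"]
UNITS = {name: 1000 ** (index + 1) for index, name in enumerate(UNIT_NAMES)}

one_zettabyte = UNITS["zettabyte"]  # 10**21 bytes

# Hypothetical yardstick (an assumption): a consumer drive of 1 TB.
drive_capacity = UNITS["terabyte"]

# Number of such drives needed to hold a single zettabyte.
drives_per_zettabyte = one_zettabyte // drive_capacity

print(f"1 zettabyte = {one_zettabyte:,} bytes")
print(f"1 zettabyte = {one_zettabyte // UNITS['gigabyte']:,} gigabytes")
print(f"1 TB drives needed for 1 zettabyte: {drives_per_zettabyte:,}")
```

On these assumptions a single zettabyte fills a billion one-terabyte drives, which is the scale of storage the hardware argument above is concerned with.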